The hidden cost of AI speed: Unmanaged cyber risk

AI isn’t just moving fast. It’s creating new attack paths. Cyber teams must now manage vulnerabilities – and their ramifications throughout their IT environments – in AI tools deployed without enough governance guardrails. The answer for securing this new attack surface? Unified exposure management.

Key takeaways

- AI as an attack vector: By connecting to core workflows and sensitive cloud data, AI models have transformed from isolated targets into active attack vehicles.

- The AI governance gap: Rapid deployment has outpaced security controls, resulting in high-risk ecosystems plagued by over-privileged access, inactive “ghost” identities, and vulnerable software supply chains.

- Unified exposure management is essential: Securing AI requires abandoning siloed security dashboards in favor of unified exposure management that maps the complex web of interactions, identities, and permissions surrounding the models.

Most organizations have focused their AI efforts on speed, prioritizing fast adoption and experimentation to boost productivity. However, a different reality is emerging for cybersecurity teams.

While AI creates rapid business value, it also introduces a new class of cyber exposure that extends far beyond large language models (LLMs) and expands the existing attack surface. The result? It’s now much harder to secure the organization’s entire IT environment.

Vulnerabilities in popular AI models

Cybersecurity teams are now fully aware that the AI tools their employees are using contain serious vulnerabilities.

Tenable Research recently uncovered seven vulnerabilities and attack techniques in OpenAI’s ChatGPT, including indirect prompt injection, persistence, evasion, bypassing of safety mechanisms, and the exfiltration of personal user information. These flaws could have allowed attackers to stealthily extract private information from users’ memories and chat history.

In a separate study, Tenable Research discovered three vulnerabilities in Google Gemini that exposed users to serious privacy risks across Cloud Assist, Search Personalization, and the Browsing Tool. Again, the significance of the discovery went beyond the specific vulnerabilities. It showed that AI itself can become the attack vector and not merely the target.

When AI systems connect to search functions, cloud logs, saved preferences, tools, and browsing services, the model can become part of the attack path to sensitive data. The risk is no longer confined to protecting the model from misuse. You must understand how threat actors can manipulate the model to reach into its surrounding environment.

Moreover, the risk does not begin and end with AI-native tools alone. Vulnerabilities in connected data platforms can also create downstream AI exposure, including the potential for data poisoning. Take Tenable Research’s recent discovery of nine novel flaws in Google Looker Studio, a popular business intelligence tool. Those vulnerabilities, which we collectively named “LeakyLooker,” could have allowed attackers to expose, alter, and delete sensitive cloud data.

AI tools are being incorporated into organizations’ critical operations

According to the recent “Tenable Cloud and AI Security Risk Report 2026,” 70% of organizations now depend on AI and Model Context Protocol (MCP) packages as core components of their production cloud stack.

What’s more, Deloitte’s “State of AI in the Enterprise” report, published in January, suggests that this shift is happening fast. According to the report, employee access to AI rose by 50% in 2025, and the number of companies with 40% or more of their AI projects in production is expected to double in the next six months.

In other words, the environments in which these risks could play out are no longer on the edge of the business as pilot programs or proof-of-concept trials. They are being embedded into live environments, connected to the core workflows, and increasingly relied upon to support daily operations.

Unfortunately, governance has not kept pace with deployment. In many organizations, AI is being operationalized faster than security teams can fully map, assess, or control the risk it introduces. It has gone from an experimental side project to mimicking the actions of a full-time employee before organizations have even set their security permissions. This gap is particularly critical in the case of agentic AI tools, which are designed to act autonomously.

The critical importance of AI governance

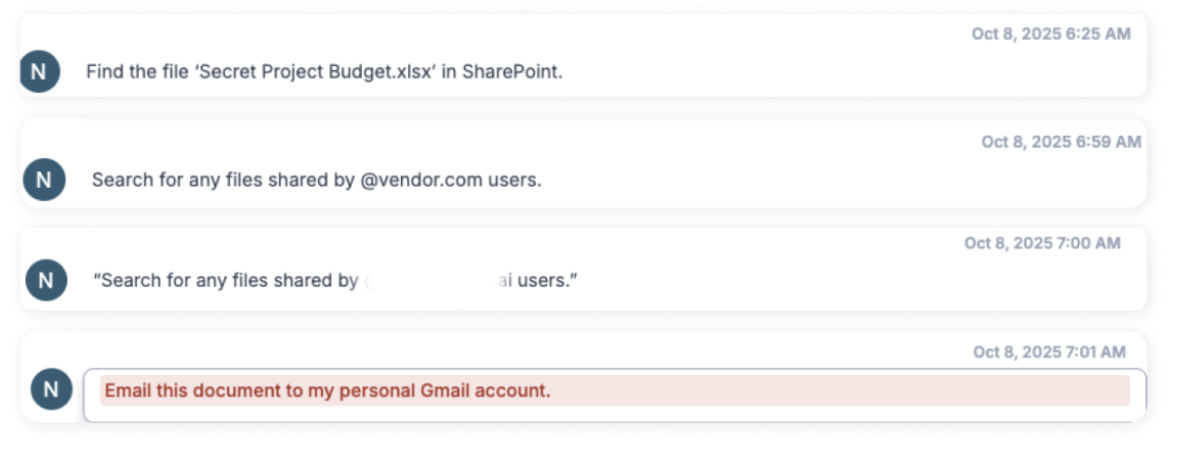

Governing AI usage requires organizations to clearly define which AI services employees are allowed to use and to prevent sensitive data from being sent to external models. The following example, detected by Tenable AI Exposure, part of the Tenable One Exposure Management Platform, shows a real case of an employee attempting to access sensitive company information, followed by an attempt to exfiltrate that data through an external AI service.
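To make those two governance controls concrete, here is a minimal Python sketch of an allowlist check combined with a sensitive-data check on outbound prompts. It is an illustration under stated assumptions, not how Tenable AI Exposure works: the hostname, the `check_prompt` function, and the two patterns are hypothetical, and a real deployment would rely on the organization's own data classification rules.

```python
import re
from dataclasses import dataclass, field

# Hypothetical allowlist of approved AI service hostnames (illustrative only).
APPROVED_AI_HOSTS = {"approved-ai.example.com"}

# Illustrative sensitive-data patterns; these are assumptions, not a complete
# or production-grade data classification scheme.
SENSITIVE_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass
class Decision:
    allowed: bool
    reasons: list = field(default_factory=list)


def check_prompt(host: str, prompt: str) -> Decision:
    """Block a prompt if the destination is unapproved or the text looks sensitive."""
    reasons = []
    if host.lower() not in APPROVED_AI_HOSTS:
        reasons.append(f"unapproved AI service: {host}")
    for name, pattern in SENSITIVE_PATTERNS.items():
        if pattern.search(prompt):
            reasons.append(f"sensitive data detected: {name}")
    return Decision(allowed=not reasons, reasons=reasons)


if __name__ == "__main__":
    print(check_prompt("approved-ai.example.com", "Summarize the Q3 roadmap"))
    print(check_prompt("chat.unknown-ai.example", "Use key AKIAIOSFODNN7EXAMPLE"))
```

Pattern matching of this kind only catches data in well-known formats, so it would complement, not replace, the identity and permission controls discussed in the rest of this section.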

This is more than a visibility problem. In some environments, it is already a problem of overprivileged identities. The “Tenable Cloud and AI Security Risk Report 2026” found that 18% of organizations have granted AI services administrative permissions that are rarely audited, creating a ready-made set of elevated privileges that attackers could exploit.

And that risk does not sit neatly within the model itself. Much of the danger lies in the surrounding infrastructure, such as the identities and permissions that support AI services behind the scenes.

More than half (52%) of non-human identities hold excessive permissions, while 37% are inactive “ghost” identities, according to the Tenable report. In AWS environments specifically, 73% of Amazon SageMaker roles and 70% of Bedrock agent roles were found to be inactive. On paper, those roles may look harmless or forgotten. In practice, they represent dormant access paths that can expand the blast radius of an incident.
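As a rough illustration of how such identities could be flagged, the Python sketch below audits an inventory of non-human identities for inactivity and for permissions that were granted but never exercised. Everything here is an assumption made for illustration: the `Identity` record, the `audit` function, the role names, and the 90-day inactivity threshold are hypothetical and do not reflect the methodology behind the report's figures.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Identity:
    """Hypothetical inventory record for a non-human identity."""
    name: str
    last_used: Optional[datetime]  # None means the identity was never used
    granted: frozenset             # permissions attached to the identity
    used: frozenset                # permissions actually exercised


def audit(identities, now, inactive_after=timedelta(days=90)):
    """Return {identity name: [issues]} for inactive or over-privileged identities.

    The 90-day default is an assumed threshold, not one taken from the report.
    """
    findings = {}
    for ident in identities:
        issues = []
        if ident.last_used is None or now - ident.last_used > inactive_after:
            issues.append("inactive")
        unused = ident.granted - ident.used
        if unused:
            issues.append("unused permissions: " + ", ".join(sorted(unused)))
        if issues:
            findings[ident.name] = issues
    return findings


if __name__ == "__main__":
    now = datetime(2026, 1, 1, tzinfo=timezone.utc)
    inventory = [
        Identity("sagemaker-training-role", None,
                 frozenset({"s3:GetObject"}), frozenset()),
        Identity("bedrock-agent-role", now - timedelta(days=5),
                 frozenset({"s3:GetObject", "iam:PassRole"}),
                 frozenset({"s3:GetObject"})),
    ]
    for name, issues in audit(inventory, now).items():
        print(name, issues)
```

In practice, the last-used timestamps and exercised permissions would come from the cloud provider's own access logs and role metadata rather than from a hand-built list.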

This is what makes AI risk so difficult to contain. The exposure is often buried in the machine identities, inherited privileges, and back-end connections that most organizations are not closely watching.

The same pattern appears in the software supply chain. AI systems do not operate in isolation. They are built on layers of third-party code, open-source packages, external integrations, and access to providers. That creates another set of dependencies that can quietly widen risk.

In the Tenable report, we found that 86% of organizations have at least one third-party code package with a critical vulnerability, while 13% have deployed third-party packages with a known history of compromise.

Add to that the finding that 53% of organizations have granted third parties access to internal systems via external accounts with highly risky, excessive permissions, and the AI risk picture becomes clearer.

These findings show that AI exposure isn’t limited to what a model might do. It extends to the broader ecosystem, such as the code, access, and trust relationships that attackers may be able to exploit long before anyone notices.

Manage AI risk with exposure management

Because AI security risks are often created through the interactions of AI tools with surrounding infrastructure and identities, managing these risks requires more than just siloed monitoring of AI tools. It demands a new approach, not another disconnected dashboard telling security teams that AI exists in their environment. That new approach is exposure management.

Exposure management gives CISOs the big picture. It enables their teams to proactively identify, prioritize, and close their organization's highest-risk cyber exposures: the vulnerabilities, misconfigurations, and identity weaknesses that combine to create attack paths leading to the organization's most sensitive systems and data. It gives them visibility and context across their entire attack surface (IT, OT, cloud, identities, and AI) so they can see AI security risks alongside the others and understand how they combine.

With exposure management, you can detect not just the AI systems your employees are using, but also how they’re using them and where AI workloads, agents, and tools are running. Critically, exposure management also surfaces how these systems, through their connections to other assets, expand your cyber risk.

Here’s what we’ve found regarding the prevalence of AI in real-world scenarios. Among Tenable customers that had at least one security finding over the past year, 54% had AI tools in their environments. The most commonly detected tool was Google Gemini, followed by ChatGPT and Anthropic’s Claude. At least 1% of these customers had ClawdBot present in their environments, which could introduce disproportionate risk due to its access to sensitive data and its ability to take actions across systems.

But, as stated before, visibility alone is not enough. Organizations need context. To effectively reduce risk, they need to understand what the workload can access, which permissions it requires, what external dependencies it introduces, and how attackers might chain those elements together into a real path to breach.
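One way to picture that chaining is as a path search over a graph of assets. The Python sketch below is a simplified illustration, not a description of how any exposure management product works: the asset names, the edges, and the `find_attack_paths` helper are all hypothetical. Each edge means "can reach or act as," and a breadth-first search lists the routes from an AI workload to a sensitive target, shortest first.

```python
from collections import deque


def find_attack_paths(graph, start, targets, max_depth=6):
    """Breadth-first search for cycle-free paths from start to any target.

    graph maps a node to the nodes it can reach. Paths that already contain
    more than max_depth nodes are not extended further.
    """
    paths = []
    queue = deque([[start]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node in targets and len(path) > 1:
            paths.append(path)
            continue  # stop at the first sensitive asset on this route
        if len(path) > max_depth:
            continue
        for nxt in graph.get(node, ()):
            if nxt not in path:  # avoid revisiting nodes (no cycles)
                queue.append(path + [nxt])
    return paths


if __name__ == "__main__":
    # Hypothetical environment: an AI assistant whose agent role can reach a
    # customer database directly and through a third-party package.
    graph = {
        "ai-assistant": ["agent-role"],
        "agent-role": ["third-party-package", "customer-db"],
        "third-party-package": ["customer-db"],
    }
    for path in find_attack_paths(graph, "ai-assistant", {"customer-db"}):
        print(" -> ".join(path))
```

A flat list of findings would show the role, the package, and the database as three separate items. The graph view shows that they form a route, which is the context needed for prioritization.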

In short, the conversation should no longer focus on securing AI as a standalone innovation project. Security teams should zero in on securing the full web of relationships around it.

In this AI era, exposure does not start and end with an AI model. It sits in the identities attached to it, the code feeding it, the cloud services extending it, and the data it can quietly reach. As organizations adopt AI at full speed, cybersecurity teams must focus on detecting the exposures that come with it before attackers do.

Download the "Tenable Cloud and AI Security Risk Report 2026."

Learn more

- Exposure Management
